We’ve spent a couple of years wasting time with chatbots that are only good for chatting or writing code that you eventually have to refactor by hand. Honestly, I’m tired of it.

The real revolution isn’t an AI explaining what a foreach is; it’s an AI that can enter my CRM, identify an unpaid invoice, and manage the collection process without me lifting a finger. That is an Agent. That is pure execution, and that’s why I’ve spent the last few days wrestling with OpenClaw.

We aren’t looking at another ChatGPT “wrapper” designed to make the interface look pretty. OpenClaw is an engine built to actually do things: it connects to tools, executes scripts, and browses the web. But of course, this is where we hit the usual wall: where does the brain of this beast live?

My Red Line: Data Does Not Leave My Network

I could connect OpenClaw to the OpenAI API and everything would run smoothly. It would be fast, efficient, and appear very intelligent. But not a chance.

As an engineer, I have a non-negotiable red line: if I’m going to give an agent access to my files, my calendar, or my terminal, that agent cannot live on a server in California. I am not handing over the keys to my systems or my clients’ privacy to a company that will use that data to feed its next model.

So, I’ve chosen the hard path: Local-First by default.

Then Comes the Harsh Reality: RAM and Fans Ready for Ignition

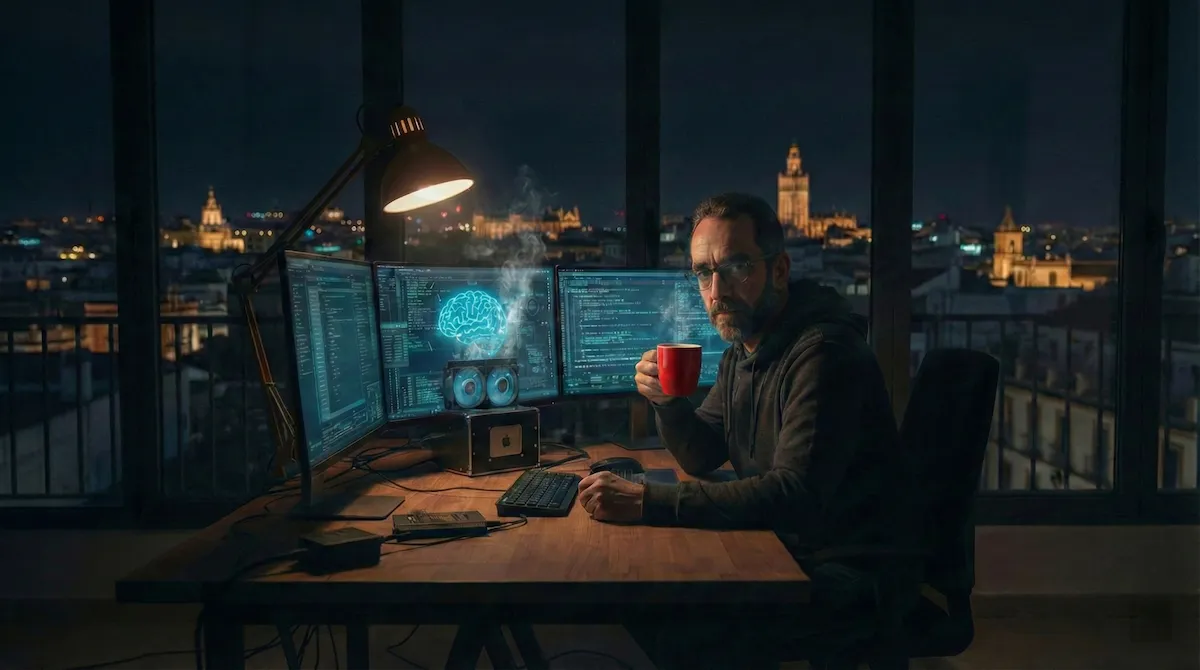

The theory of “Digital Sovereignty” looks very professional on paper, but in practice, my Mac is about to enter orbit. Running OpenClaw in a Docker container against a local LLM served by Ollama is a reality check for today’s hardware. We are trying to squeeze the capacity of a data center into a laptop, and naturally, things get complicated.

If you want complex reasoning, you need a model with enough parameters. And if you want it running locally, get ready to set aside 40 GB of RAM just for the model to start working. The result? Fans at full throttle and latency that takes me back to the 56k modem era. Right now, local AI is either “dim-witted” or runs at a snail’s pace. Sometimes, both.
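Where does a number like 40 GB come from? A rough back-of-the-envelope sketch: weight memory is roughly the parameter count times the bytes per weight, plus some headroom for the context cache and runtime. The function name, the 20% overhead, and the model sizes below are illustrative assumptions, not measurements from my setup.

```python
def estimate_model_ram_gb(params_billions: float,
                          bits_per_weight: int,
                          overhead_fraction: float = 0.2) -> float:
    """Rough RAM estimate in GB (10^9 bytes) for loading an LLM locally.

    overhead_fraction is an assumed allowance for the KV cache and runtime
    buffers; real usage depends on context length and the inference engine.
    """
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    return weight_bytes * (1 + overhead_fraction) / 1e9


# Hypothetical model sizes and quantization levels, for comparison only.
for params, bits in [(8, 4), (70, 4), (70, 16)]:
    gb = estimate_model_ram_gb(params, bits)
    print(f"{params}B parameters at {bits}-bit: ~{gb:.1f} GB")
```

Under these assumptions, a 70B-parameter model quantized to 4 bits lands at roughly 42 GB, which is the same ballpark as the figure above, while the same model at 16 bits would not fit on any laptop I own.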

Why Do I Complicate My Life?

So, why do I persist if the performance is poor and the machine is burning up? Because what I get in return is sovereignty.

By running OpenClaw against a local model, privacy stops being a legal term and becomes a technical reality. I can ask it to analyze confidential contracts or raw databases (like an 8 GB one I’m processing right now) with the peace of mind that I could cut the fiber optic cable and the system would keep working.
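To make "the data never leaves the machine" concrete, here is a minimal sketch that talks to Ollama's local HTTP endpoint directly and refuses to send a prompt anywhere that is not loopback. It does not go through OpenClaw, whose configuration I am not showing here; the model name `llama3` and the helper names are placeholders I am assuming for illustration.

```python
import json
import urllib.request
from urllib.parse import urlparse

# Ollama listens on port 11434 by default.
OLLAMA_URL = "http://localhost:11434/api/generate"
LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def build_request(prompt: str, model: str = "llama3",
                  url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a POST request for a local model, rejecting non-loopback hosts."""
    host = urlparse(url).hostname
    if host not in LOOPBACK_HOSTS:
        raise ValueError(f"refusing to send data to non-local host: {host}")
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to the local Ollama server and return its answer."""
    request = build_request(prompt, model)
    # Generous timeout: local inference on a laptop can be slow.
    with urllib.request.urlopen(request, timeout=600) as response:
        return json.loads(response.read())["response"]
```

With Ollama running and a model pulled, calling `ask_local_model("Summarize this contract clause: ...")` returns the generated text. The loopback check is a cheap guard, not a security boundary, but it makes the red line explicit in code.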

Besides, there are no more surprises on the credit card for token consumption; here I only pay for the electricity and I have total control of the stack. If OpenAI decides they don’t like me tomorrow or they change their policies, my agent stays right where it is, working for me.

I’d rather invest in hardware and fight with network configurations, Docker, and integrations than rent someone else’s intelligence for this kind of data. OpenClaw is a promising tool because it allows us to automate without sacrificing privacy, but hardware is the main bottleneck.

Useful AI will be private, or it simply won’t be useful for a professional. In the meantime, if you hear a sound like a Boeing 747 taking off near Seville, don’t worry: it’s just my Mac trying to reason without having to ask anyone for permission.